Note: Before proceeding, a great recap of probability concepts can be found here, written by Paul Rossman.

But First, Conditional Probability

When I teach conditional probability, I tell my students to pay close attention to the vertical line in the notation P(A | B). Whenever they see it, they must imagine the loud baritone behind-the-scenes announcer voice from Bill Nye saying, “GIVEN!”

The symbol | always indicates that we assume the event following it has already occurred. So P(A | B) should be read: the probability that event A occurs, given that event B has already occurred.

A simple example of conditional probability uses the ubiquitous deck of cards. From a standard deck of 52, what is the probability you draw an ace on the second draw if you know an ace has already been drawn (and left out of the deck) on the first draw?

Since a deck of 52 playing cards contains 4 aces, the probability that the first card is an ace is 4/52. But the probability of drawing an ace given that the first card drawn was an ace is 3/51: there are 3 aces left among the 51 remaining cards. In other words, a conditional probability is computed as if the conditioning event has already taken place.
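To check these numbers, here is a minimal Python sketch that enumerates every ordered pair of distinct cards from a 52-card deck and computes the conditional probability as a ratio of counts. Labeling cards 0 to 3 as the aces is an arbitrary assumption made only for illustration.

```python
from fractions import Fraction
from itertools import permutations

DECK = range(52)
ACES = {0, 1, 2, 3}  # assumption: cards 0-3 stand in for the four aces

# Every ordered (first, second) draw without replacement: 52 * 51 pairs.
draws = list(permutations(DECK, 2))

first_ace = [d for d in draws if d[0] in ACES]
both_aces = [d for d in first_ace if d[1] in ACES]

p_first_ace = Fraction(len(first_ace), len(draws))
# P(second ace | first ace): restrict to draws where the first card is an ace,
# then count how many of those also have an ace second.
p_second_given_first = Fraction(len(both_aces), len(first_ace))

print(p_first_ace)           # 1/13, the same as 4/52
print(p_second_given_first)  # 1/17, the same as 3/51
```

Counting outcomes this way makes the "given" concrete: conditioning on the first ace simply shrinks the set of outcomes we are counting over.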

False Positives and False Negatives: What They’re Not

Tests are flawed.

According to MedicineNet, a rapid strep test from your doctor or urgent care has a 2% false positive rate. This means 2% of patients who do not actually have Group A streptococcus bacteria in their mouth test positive for the bacteria. The rapid strep test also returns a negative result 5% of the time in patients who do have the bacteria: a false negative.

Another way to look at it: the 2% false positive rate means the test correctly returns a negative result for 98% of patients who do not have the bacteria. The 5% false negative rate means the test correctly returns a positive result for 95% of patients who do have the bacteria.

It’s common to hear these rates misinterpreted. They do not mean that a patient who tests positive on a rapid strep test has a 98% likelihood of having the bacteria and a 2% likelihood of not having it. Nor does a negative result mean the patient still has a 5% chance of having the bacteria.

Even more confusing, but important, is this: while a 2% false positive rate does mean that 2% of patients without strep test positive, it does not mean that 2% of all positive results come from patients without strep. Calculating that kind of probability takes more information. Specifically, we need to know how prevalent strep is in the population being tested before we can find the actual probability that someone who tests positive has the bacteria.
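Here is a minimal Python sketch of that point, using the MedicineNet rates quoted above (2% false positive, 5% false negative). The prevalence values are hypothetical, chosen only to show how much the answer moves; they are not real strep statistics.

```python
FALSE_POSITIVE_RATE = 0.02  # P(positive | no strep)
FALSE_NEGATIVE_RATE = 0.05  # P(negative | strep)


def p_strep_given_positive(prevalence: float) -> float:
    """Probability a patient has strep, given a positive rapid test."""
    true_pos = prevalence * (1 - FALSE_NEGATIVE_RATE)   # has strep, tests positive
    false_pos = (1 - prevalence) * FALSE_POSITIVE_RATE  # no strep, tests positive
    return true_pos / (true_pos + false_pos)


# Hypothetical prevalences, for illustration only.
for prevalence in (0.01, 0.10, 0.30):
    print(f"prevalence {prevalence:.0%}: P(strep | positive) = "
          f"{p_strep_given_positive(prevalence):.3f}")
```

With the test's error rates held fixed, the probability that a positive patient really has strep comes out to about 0.32 at 1% prevalence and about 0.95 at 30% prevalence.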

Enter: Bayes’ Theorem

Bayes’ Theorem accounts for both the prevalence of the bacteria in the population and the test’s false positive and false negative rates.
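In its standard form, for an event A and observed evidence B (with A^c meaning "not A"), Bayes' Theorem reads as follows; the second form expands the denominator P(B) over the two ways B can happen:

```latex
P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}
            = \frac{P(B \mid A)\,P(A)}{P(B \mid A)\,P(A) + P(B \mid A^c)\,P(A^c)}
```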

I know, I know: that formula looks INSANE. So I’ll start simple and build up to applying it gradually; soon you’ll see it’s not too bad.

Example: Drug Testing

Many employers require prospective employees to take a drug test. A positive result on this test indicates that the prospective employee uses illegal drugs. However, not all people who test positive actually use drugs. For this example, suppose that 4% of prospective employees use drugs, the false positive rate is 5%, and the false negative rate is 10%.

Here we’ve been given 3 key pieces of information:

- The prevalence of drug use among these prospective employees, which is given as a probability of 4% (or 0.04). We can use the complement rule to find the probability an employee doesn’t use drugs: 1 – 0.04 = 0.96.

- The probability a prospective employee tests positive when they did not, in fact, take drugs — the false positive rate — which is 5% (or 0.05).

- The probability a prospective employee tests negative when they did, in fact, take drugs — the false negative rate — which is 10% (or 0.10).
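As a sketch, the three givens can be stored as Python constants, with the complement rule supplying the other three branch probabilities the tree will need. The variable names are my own choice, not from any library.

```python
P_DRUGS = 0.04              # prevalence of drug use
FALSE_POSITIVE_RATE = 0.05  # P(positive | no drugs)
FALSE_NEGATIVE_RATE = 0.10  # P(negative | drugs)

# Complement rule: each pair of complementary probabilities sums to 1.
P_NO_DRUGS = 1 - P_DRUGS                        # 0.96
P_POS_GIVEN_DRUGS = 1 - FALSE_NEGATIVE_RATE     # 0.90, true positive rate
P_NEG_GIVEN_NO_DRUGS = 1 - FALSE_POSITIVE_RATE  # 0.95, true negative rate

print(P_NO_DRUGS, P_POS_GIVEN_DRUGS, P_NEG_GIVEN_NO_DRUGS)
```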

It’s helpful to step back and consider the two things that are happening here: first, the prospective employee either takes drugs or doesn’t. Then, they take a drug test and either test positive or don’t.

I recommend a visual guide for these types of problems. A tree diagram helps you take these two pieces of information and logically draw out the unique possibilities.

Tree diagrams also show us where to apply the multiplication principle. For example, to find the probability that a prospective employee didn’t take drugs and tests positive, we multiply along that branch: P(no drugs) * P(positive | no drugs) = (0.96)*(0.05) = 0.048.
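Here is one way to sketch the whole tree in Python: each leaf's probability is the product of the two branches leading to it. The nested-dictionary layout is just an illustrative choice.

```python
p_drugs = 0.04
branches = {
    # first split: P(drug status); second split: P(test result | drug status)
    "drugs":    (p_drugs,     {"positive": 0.90, "negative": 0.10}),
    "no drugs": (1 - p_drugs, {"positive": 0.05, "negative": 0.95}),
}

leaves = {}
for status, (p_status, results) in branches.items():
    for result, p_result_given_status in results.items():
        # Multiplication principle: P(status and result)
        leaves[(status, result)] = p_status * p_result_given_status

for (status, result), p in leaves.items():
    print(f"P({status} and {result}) = {p:.3f}")

# The four leaves cover every possible outcome, so they sum to 1.
print(f"total = {sum(leaves.values()):.3f}")
```

The four leaves come out to 0.036, 0.004, 0.048 and 0.912.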

An important note: the probability of selecting a prospective employee who did not take drugs and tests negative is not the same as the probability that an employee tests negative GIVEN they did not take drugs. In the former, we don’t know in advance whether they took drugs; in the latter, we know they did not, and the “given” language signals this prior knowledge.
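With this example's numbers, the two quantities differ noticeably. A quick Python check:

```python
p_no_drugs = 0.96
p_neg_given_no_drugs = 1 - 0.05  # true negative rate

# Joint: pick someone at random; they don't use drugs AND test negative.
p_no_drugs_and_neg = p_no_drugs * p_neg_given_no_drugs

# Conditional: we already know they don't use drugs.
print(f"P(no drugs and negative) = {p_no_drugs_and_neg:.3f}")   # 0.912
print(f"P(negative | no drugs)   = {p_neg_given_no_drugs:.3f}")  # 0.950
```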

What’s the probability someone tests positive?

We can also use the tree diagram to calculate the probability a potential employee tests positive for drugs.

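A potential employee could test positive when they took drugs OR when they didn’t. To find each probability separately, multiply along its branch of the tree: P(drugs) * P(positive | drugs) = (0.04)*(0.90) = 0.036, where 0.90 is the complement of the 10% false negative rate, and P(no drugs) * P(positive | no drugs) = (0.96)*(0.05) = 0.048.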

In probability, “OR” between events that can’t both happen means you add. Since there are two separate ways to test positive, add the two probabilities you just calculated:

P(positive) = 0.048 + 0.036 = 0.084
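This is the law of total probability. A minimal Python sketch of the same sum:

```python
p_drugs = 0.04
p_pos_given_drugs = 0.90     # 1 - false negative rate
p_pos_given_no_drugs = 0.05  # false positive rate

# Two mutually exclusive ways to test positive; add their joint probabilities.
p_positive = p_drugs * p_pos_given_drugs + (1 - p_drugs) * p_pos_given_no_drugs
print(round(p_positive, 4))  # 0.084
```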

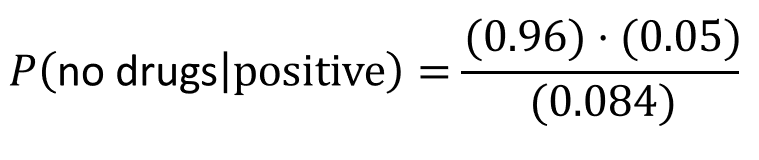

Given a positive result, what is the probability a person doesn’t take drugs?

Which brings us to Bayes’ Theorem: P(no drugs | positive) = P(positive | no drugs) * P(no drugs) / P(positive).

Let’s find all of the pieces:

- P(positive | no drugs) is merely the probability of a false positive = 0.05

- P(no drugs) = 0.96

- So we already calculated the numerator above when we multiplied 0.05*0.96 = 0.048

- We also calculated the denominator: P(positive) = 0.084

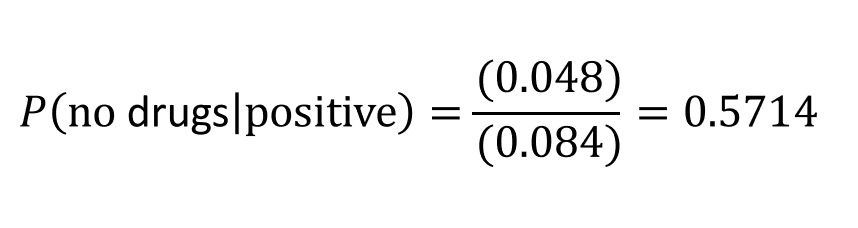

which simplifies to P(no drugs | positive) = 0.048 / 0.084 ≈ 0.5714.

Whoa.

This means that if we know a prospective employee tested positive for drug use, there is a 57.14% probability they don’t actually take drugs, at least in this population with 4% drug use. That is MUCH HIGHER than the false positive rate of 0.05.
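The full calculation fits in a small function. This is a sketch of my own: the name `posterior` and its parameters are hypothetical, not from any library. It takes a prior and the two conditional probabilities of the evidence and returns the posterior.

```python
def posterior(prior: float, p_evidence_given_h: float,
              p_evidence_given_not_h: float) -> float:
    """Bayes' Theorem: P(H | E) for a hypothesis H and evidence E."""
    numerator = p_evidence_given_h * prior
    # Law of total probability for the denominator, P(E)
    p_evidence = numerator + p_evidence_given_not_h * (1 - prior)
    return numerator / p_evidence


# H = "doesn't take drugs" (prior 0.96), E = "tests positive".
# P(positive | no drugs) = 0.05; P(positive | drugs) = 0.90.
p = posterior(prior=0.96, p_evidence_given_h=0.05, p_evidence_given_not_h=0.90)
print(f"{p:.4f}")  # 0.5714
```

Swapping the roles of the hypotheses, `posterior(0.04, 0.90, 0.05)` gives P(drugs | positive) instead.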

How is that different from a false positive? The false positive rate says, “Given that this person doesn’t take drugs, the probability they test positive is 5%.” Bayes’ Theorem reverses the condition: given that they tested positive, the probability they don’t take drugs is 57%.

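Why is this probability so large? It hardly seems possible! But it takes into account how few people in the population take drugs: only 4%. Because the other 96% are non-users, even a 5% false positive rate among them produces more positive results (0.048) than the drug users do (0.036).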
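One way to see this is with counts instead of probabilities. The sketch below imagines a hypothetical group of 10,000 applicants with exactly the rates in this example:

```python
applicants = 10_000
users = applicants * 4 // 100           # 4% use drugs -> 400
non_users = applicants - users          # 9,600

true_positives = users * 90 // 100      # 90% of users test positive -> 360
false_positives = non_users * 5 // 100  # 5% of non-users test positive -> 480

# Among everyone who tests positive, what share doesn't use drugs?
share = false_positives / (true_positives + false_positives)
print(true_positives, false_positives, f"{share:.4f}")  # 360 480 0.5714
```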

In math terms:

P(positive | no drugs) = 0.05 while P(no drugs | positive) = 0.5714
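If the formula still feels like a trick, a Monte Carlo simulation should land near the same answer empirically. This Python sketch draws a simulated population with the example's rates; the population size and seed are arbitrary assumptions, so the estimate will only be close to 0.5714, not exact.

```python
import random


def simulate(n: int = 200_000, seed: int = 42) -> float:
    """Estimate P(no drugs | positive) by simulating n applicants."""
    rng = random.Random(seed)
    positives = 0
    positives_without_drugs = 0
    for _ in range(n):
        uses_drugs = rng.random() < 0.04
        if uses_drugs:
            tests_positive = rng.random() < 0.90  # 10% false negatives
        else:
            tests_positive = rng.random() < 0.05  # 5% false positives
        if tests_positive:
            positives += 1
            if not uses_drugs:
                positives_without_drugs += 1
    return positives_without_drugs / positives


print(f"{simulate():.3f}")
```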

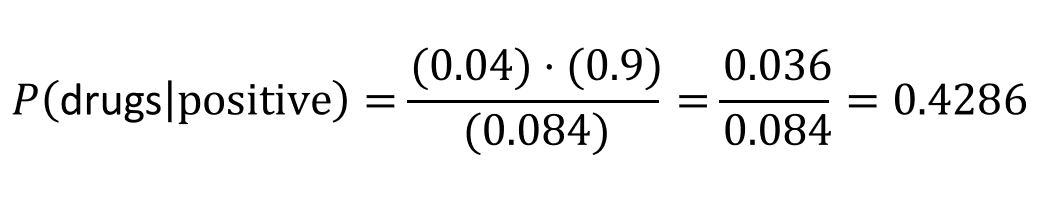

Which also means that if a potential employee tests positive, the probability they do indeed take drugs is lower than you might think. You can find it by taking the complement of the last calculation: 1 - 0.5714 = 0.4286. Or recalculate with the formula: P(drugs | positive) = P(positive | drugs) * P(drugs) / P(positive) = (0.90)*(0.04) / 0.084 = 0.036 / 0.084 ≈ 0.4286.
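Both routes can be checked against each other in a few lines of Python:

```python
p_positive = 0.04 * 0.90 + 0.96 * 0.05  # 0.084

# Bayes' Theorem for P(no drugs | positive), then its complement
p_no_drugs_given_pos = 0.96 * 0.05 / p_positive
via_complement = 1 - p_no_drugs_given_pos

# Bayes' Theorem applied directly: P(positive | drugs) P(drugs) / P(positive)
via_bayes = 0.04 * 0.90 / p_positive

print(f"{via_complement:.4f} {via_bayes:.4f}")  # 0.4286 0.4286
```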

Now You Try: #DataQuiz

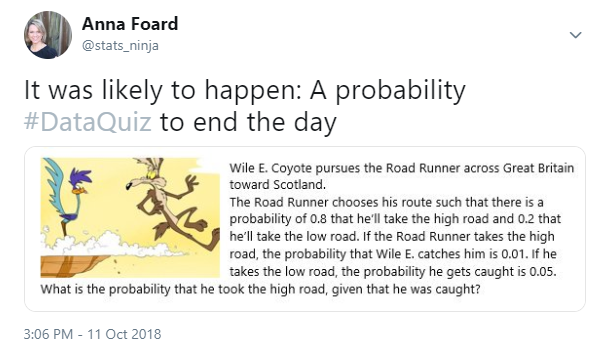

Back in October I posted a #DataQuiz to Twitter, with a Bayesian twist. Can you calculate the answer using this tutorial without looking at the answer (in tweet comments)?

Hints:

- Draw out the situation using a tree diagram

- What happens first? What happens second?

- What is “given”?

Next Up: Business Applications

Stay tuned! Paul Rossman has a follow-up post that I’ll link to when it’s ready. He’s got some brilliant use case scenarios with application in Tableau.

Very clear, thanks. Except in the xkcd image posted, Randall Munroe got Bayes’s rule wrong, inverting P(I picked up a seashell) and P(I’m near the ocean). But the link is to his corrected version.
